On May 6, the Government of Canada announced that its investigation into OpenAI and its product ChatGPT had found the company was not compliant with Canadian privacy laws. ChatGPT violated those laws through its machine-learning model and the way it was continually trained on prompts from the public. By not revealing the extent to which it used people's data to train itself, OpenAI failed to meet Canada's consent requirements. According to the Privacy Commissioner of Canada, Philippe Dufresne, ChatGPT is now compliant with federal standards, but he stressed at a news conference that companies need to be compliant before releasing their products, not two years after.

A two-year investigation

The Office of the Privacy Commissioner of Canada opened its investigation into ChatGPT's privacy practices in April 2023, and its counterparts in Quebec, British Columbia and Alberta later joined. The investigation began after Canadian privacy watchdogs learned that ChatGPT might be collecting personal information about Canadians without telling them. While the application did have in-depth disclosures, none were forward-facing, and none were

Philippe Dufresne was joined at the news conference by BC's Information and Privacy Commissioner, Michael Harvey. According to Dufresne, OpenAI has now ‘conditionally resolved’ the issue by taking steps to limit the scope of the information it gathers from users. He stressed, however, that ChatGPT had been non-compliant for more than two years.

“We found that there was a lack of accountability.”

According to Dufresne, OpenAI leaders said they had ‘rushed’ ChatGPT into Canadian markets once the threat from competing AI models became clear. Dufresne said OpenAI's leaders told him that ChatGPT was released in Canada with ‘limited testing’, meaning the model had to be trained on the fly using prompts and conversations from Canadian users.

“We found that there were things they should have done, and now have done that should have taken place before this was launched. This is the message to organizations, that you have to do this beforehand.”

While the investigation began in April 2023, ChatGPT launched in Canada in November 2022, meaning the application was active and collecting Canadians' data for at least four months before the probe opened. Federal and provincial regulators did not launch a formal joint investigation until May 2023.

What data was scraped

According to the report from Canadian privacy watchdogs, ChatGPT was collecting a wide array of data from Canadians. At the most basic level, it collected information from users' conversations, which could include health conditions, political views, personal opinions and even details about users' children. ChatGPT uses these conversations to build a ‘profile’ of each user in order to customize the experience; in other words, it tries to learn who you are so it can better respond to your individual requests. The probe also found that OpenAI was collecting personal information from ‘publicly accessible sources’ such as social media, chat rooms and other similar sites, meaning ChatGPT could draw on information about a user found online when building that user's profile. ChatGPT was not scraping data from users' hard drives or from illegal sources; it violated Canada's privacy laws because it did not adequately disclose what it was collecting.

What laws did it break, and how has it changed?

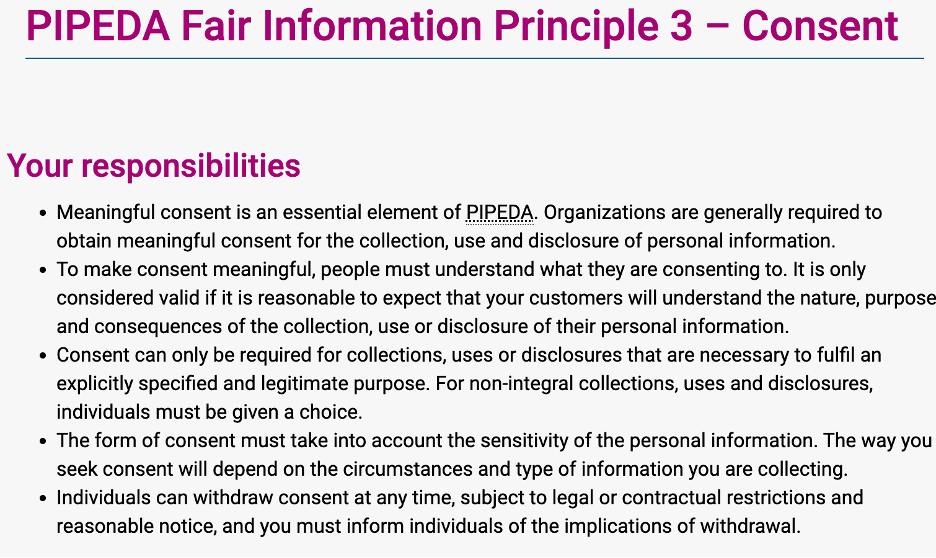

In a world where personal data is mined like gold, the Canadian government aims to limit how much of it companies can collect and use. It does so through the Personal Information Protection and Electronic Documents Act (PIPEDA). In section three of PIPEDA, the government outlines in plain English the responsibilities of tech companies, how to fulfill those responsibilities, and tips for meeting the standard.

PIPEDA makes clear when companies must obtain express consent rather than implied consent. According to the Act, organizations must obtain express consent when the information collected is sensitive, when the collection falls outside the ‘reasonable expectations of the individual’, or when it could pose a risk to the individual. It's not difficult to see how ChatGPT failed these requirements. Information about health conditions and children is certainly sensitive, and while many Canadians assumed ChatGPT was ‘learning’ from their conversations, the extent of its data collection went beyond what the Canadian government considers reasonable.

Express consent vs. implied consent

Express consent is given explicitly, through an agreement a user must accept before using a service. It is often obtained through pop-ups that block access to a service until the user consents. Implied consent is trickier: it relies on the idea that Canadians have a general understanding of how data collection works. For example, when you sign up for an email newsletter, you give the organization implied consent to use your email address to send you communications.

With privacy in social media and AI dominating tech conversations around the world, and multiple countries implementing age restrictions or outright social media bans, the Office of the Privacy Commissioner's probe is most likely just the beginning of Canada's battle for privacy.